Autonomous driving technology has the potential to save many more lives than it takes. But that may not matter if the public becomes convinced that autonomous vehicles are a danger to society.

Will the death of a pedestrian in Tempe, Arizona, derail the self-driving car initiatives of firms like Uber, General Motors and Tesla? The answer greatly depends on the public’s perception of the risks posed by autonomous vehicles – something that the fatal accident is unlikely to help.

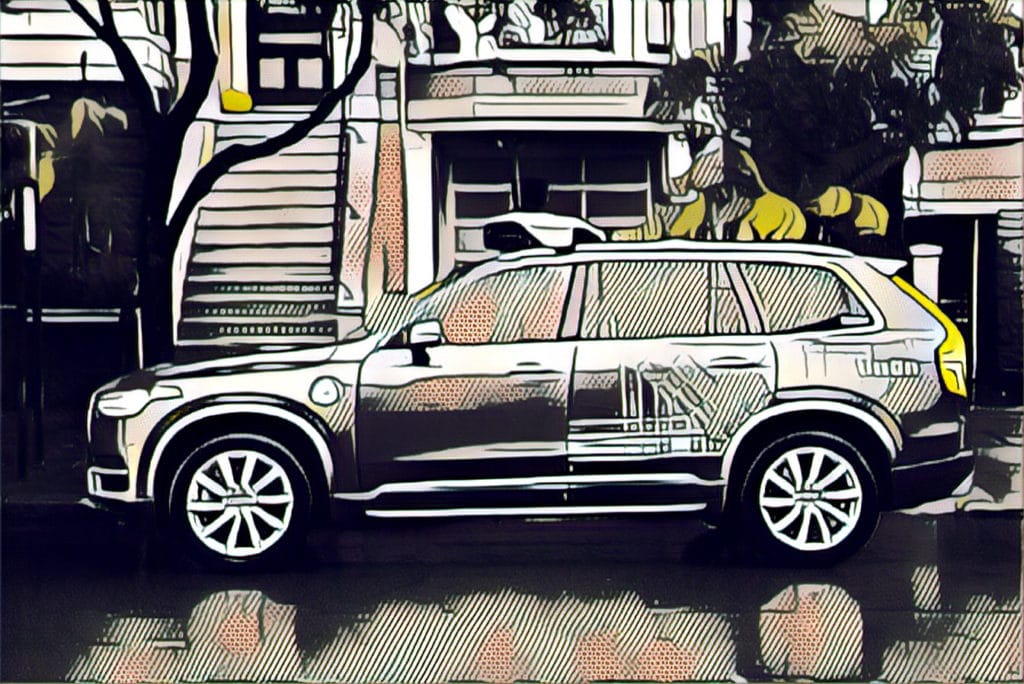

That’s one conclusion of security experts after 49-year-old Elaine Herzberg was struck and killed by a self-driving Uber Volvo XC90 SUV as she walked her bicycle across a Tempe street Sunday night. Within hours, Uber responded by pulling its autonomous vehicles from the streets of Tempe, Pittsburgh, Toronto and other cities where they had been operating, while the authorities and the company investigate the Tempe incident. Its CEO, Dara Khosrowshahi, issued a statement of condolence for Herzberg’s family and promised cooperation with the police investigation.

Some incredibly sad news out of Arizona. We’re thinking of the victim’s family as we work with local law enforcement to understand what happened. https://t.co/cwTCVJjEuz

— dara khosrowshahi (@dkhos) March 19, 2018

But a bigger risk looms: the specter of growing public concern and anger prompting a backlash against autonomous vehicles in the name of safety. Herzberg’s death was the first known death of a pedestrian in the U.S. linked to a fully autonomous vehicle. In July 2016, the driver of a Tesla was killed when his car collided with a semi while in hands-free “autopilot” driving mode.

Early and unofficial reports from law enforcement suggest that the autonomous vehicle, which had a human driver at the wheel, was not at fault in the incident. But the incident comes amid warnings and other cautionary statements from technology experts that automakers and officials are moving forward heedlessly by allowing autonomous vehicles to operate on public streets.

In 2016, a representative from the Association of Global Automakers, a trade group for the auto industry, warned officials from the National Highway Traffic Safety Administration (NHTSA) that the U.S. government needed to adopt a slower and more deliberative approach to allowing autonomous driving features on the roads.

“Procedural safeguards are in place for valid reasons,” Paul Scullion, safety manager at the Association of Global Automakers, said at a public meeting on self-driving cars hosted by NHTSA, according to an Associated Press report. “Working outside that process might allow the government to respond more quickly to rapidly changing technology, but that approach would likely come at the expense of thoroughness,” he said.

Others have been more blunt.

“There is no question that someone is going to die in this technology,” Duke University roboticist Missy Cummings said in testimony before the U.S. Senate Committee on Commerce, Science, and Transportation in 2016. “The question is when and what can we do to minimize that.”

In a 2017 report, the Cloud Security Alliance (CSA) warned that the ecosystem of connected vehicles is expanding rapidly, but that car manufacturers and industry stakeholders still need to come up with a way to prevent unauthorized data transmissions between the different components that interact with connected vehicles.

While a slow, deliberate approach to autonomous vehicle adoption may avoid mishaps or even deaths linked to the technology, the question of whether that is the best approach is complicated, says Beau Woods, the cyber safety innovation fellow with the Atlantic Council.

Government data suggests that U.S. roads are becoming more deadly, after decades of steady improvement in driver and vehicle safety. In 2016 there were 37,461 traffic fatalities in the U.S., an increase of more than 15% from 2011. The vast majority of those fatalities were attributable to human error, from speeding to distracted driving.

“Most if not all of those could have been avoided if we had something like autonomous vehicles,” Woods said, noting the growing use of driver assistance technologies. That type of calculation makes it understandable that policy makers want to move quickly to adopt autonomous driving technology, he said.

But Woods warns that automakers, technology firms and lawmakers need to be careful not to move more quickly than public trust merits. More incidents of an autonomous vehicle or a driver in autopilot mode having a fatal accident could put a chill on the public’s support for the technology – even if data suggests that the technology will make roads safer overall.

While rapid prototyping and “failing fast” may be the mantra of Silicon Valley, that thinking doesn’t apply well when the consequences of failure are matters of life and death. “You can’t reboot life, so you have to be thoughtful and intentional about testing and developing autonomous vehicles,” Woods said.

Regulators and lawmakers should be mindful of the motives of companies like Uber, whose primary responsibility is to shareholders, not public safety. In recent decades, the interests of public safety and shareholders have tended to align, but that might not always be the case, Woods noted.

The conundrum is one common to emerging industries. The aviation industry went through a similar crisis in the period after World War I, when “barnstorming” demonstrations popularized planes and flying, but also created the public impression that flying was a risky and dangerous undertaking. The response from the U.S. government was the Air Commerce Act of 1926, which gave the federal government sweeping powers to ensure the safety of civil aviation. Under the Act, new rules were created that all but outlawed barnstorming and authorized the Department of Commerce to assess and certify the airworthiness of aircraft, test and license pilots, operate and maintain safe airfields and, importantly, investigate crashes and other safety incidents. The result was a drastic reduction in flying-related accidents and deaths.

Similar action may be needed with autonomous vehicles as a “backstop” to widespread failures that could undermine the whole industry, Woods said.

“These are really tough choices. I’m glad I’m not in the position of having to make those decisions!”

Spot on, Paul. I heard (but have never corroborated) that fear is why we had elevator operators early on. Even though the elevators were capable without operators, passengers were too fearful to ride without a human at the controls. Similar concept today, but clearly a more complex problem and solution.

Having driven the dark patch of Curry Road in Tempe, I believe this is a case of expecting more from autonomous driving technology than we would from a human, which is unrealistic at this stage in its development. The pedestrian was jaywalking across a very dark stretch of the street, and it also curves, so your headlights wouldn’t illuminate a pedestrian until you were on them. It’s disappointing this is the “case study” being used to attack the driverless car programs.