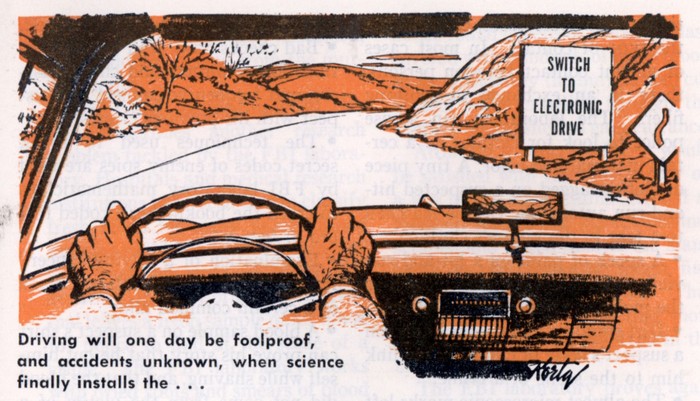

The folks over at The Atlantic have an intriguing take on the subject of “connected vehicles” and autonomous driving. This is a vision that we’ve been chasing for more than 50 years (consider all the Technicolor “highway of tomorrow” films from the 50s and 60s), and we’re on the cusp of realizing it. Google’s self-driving car is racking up the miles, and automated features like hands-free cruise control and collision avoidance are making their way into production vehicles.

As Alexis Madrigal at The Atlantic’s (cool) CityLab writes, however, there’s one major fly in the ointment when you consider the super-efficient, algorithmically driven road of the future: humans.

Specifically, Madrigal, in the course of writing an article on how to build an ‘unhackable’ car, poses a scenario that I think is very likely: humans who subvert or otherwise game vehicle automation features to suit their own needs.

Imagining the orderly procession of precisely spaced, autonomous vehicles on the freeway of the future, Madrigal wonders: “What if one person jailbreaks her car, and tells her AI driver to go just a little faster than the other cars? As the aggressive car moves up on the other vehicles, their safety mechanisms kick in and they change lanes to get out of the way. It might make the overall efficiency of the transportation lower, but this one person would get ahead.”


With a single vehicle, that might not be a problem. But, humans being humans, that kind of aggressive or ‘divergent’ behavior is likely to be much more common, especially in a country like the U.S., where individualism is a strong cultural trait. For one thing, other car occupants, seeing another car zooming ahead, would seek the same ‘edge.’ As more jailbroken cars that can refuse to cooperate appear, the order soon breaks down.

Madrigal bases his article around the work of Ryan Gerdes of Utah State University. Gerdes received a $1.2 million grant from the National Science Foundation to look at the security of the autonomous vehicle future. He warns that automated driving – like other automated behavior – will only be as good as the algorithms that control the behavior of the individual vehicles.

“The designers of these systems essentially believe that all of the nodes or vehicles in the system want to cooperate, that they have the same goals,” Gerdes said. “What happens if you don’t follow the rules? In the academic theory that’s built up to prove things about this system, this hasn’t been considered.”

The possible problems are legion, Madrigal writes. Automated driving algorithms that are overly deterministic could actually amplify small disruptions caused by non-conforming vehicles in ways that our current, atomized driving culture doesn’t. Further, automated vehicles could fall victim to a range of threats that simply don’t exist today, such as phantom obstacles in the roadway created by manipulating in-road or on-vehicle sensors.

It’s a fascinating discussion and well worth reading. Check it out via Building an Unhackable Autonomous Vehicle – CityLab.