Software that a number of vendors use to remotely program implantable cardiac devices is rife with exploitable vulnerabilities that leave the devices open to attack and compromise, according to a report by the firm WhiteScope Inc.

The analysis of hardware and software associated with implantable cardiac devices spanned four separate vendors and product families and found a wide range of security weaknesses. Among them were the use of permanent (or “hardcoded”) authentication credentials such as user names and passwords, and the use of insecure communications, with one vendor transmitting patient data “in the clear.” All four product families were found to be highly susceptible to “reverse engineering” by a knowledgeable adversary, exposing design flaws that could then be exploited in remote or local attacks, researchers Billy Rios of WhiteScope and Dr. Jonathan Butts wrote in their report.

The two researchers investigated a range of hardware and software tools that together make up the ecosystem of implantable cardiac devices. In addition to the implantable devices themselves, Rios and Butts obtained and analyzed “physician programmers,” which are used to configure and update implanted devices wirelessly; home monitoring hardware and software; and the patient support network. Their work follows an exemption to the Digital Millennium Copyright Act (DMCA), issued by the Librarian of Congress and in effect since October 2016, that permits security research on consumer devices, a contentious issue and one that most device manufacturers oppose. The two researchers shared a copy of their research with the National Health ISAC (Information Sharing and Analysis Center), they said in a blog post.

“The results of the holistic analysis help clarify the nature and scope of the threats facing the implantable cardiac device ecosystem and the potential impact to patient care. WhiteScope researchers are motivated by the prospect of enhancing cyber security for the medical device community in a manner that strengthens patient safety.”

The security of implantable medical devices has been the subject of controversy. In August 2016, for example, the firm MedSec released a report on vulnerabilities in devices manufactured by St. Jude Medical. MedSec, working with the Wall Street firm Muddy Waters, warned of a wide range of exploitable security holes in the company’s pacemakers, implantable cardioverter defibrillators (ICDs), and cardiac resynchronization therapy (CRT) devices. A subsequent report by the U.S. Food and Drug Administration (FDA), released in April, found that St. Jude Medical knew about serious security flaws in its implantable medical devices as early as 2014 but failed to address them with software updates or other mitigations, or by replacing those devices.

The latest report, while omitting the names of specific products or vendors, finds similar evidence of lax security throughout implantable device ecosystems.

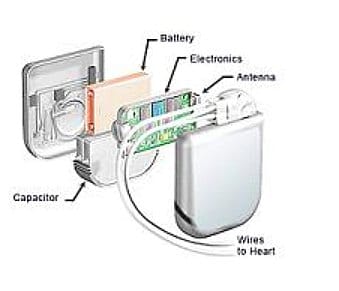

Modern implantable medical devices such as pacemakers are a “system of systems,” the researchers note. Pacemaker devices, programmers, home monitoring systems, and the supporting update infrastructure all work in concert to provide care to the patient.

That complexity, however, makes security and management harder, the researchers found. “Despite efforts from the FDA to streamline routine cybersecurity updates, all programmers we examined had outdated software with known vulnerabilities. Across the 4 programmers built by 4 different vendors, we discovered over 8,000 vulnerabilities associated with outdated libraries and software in pacemaker programmers,” the researchers report.

Many of those flaws were similar across vendors, reflecting deep similarities among the different manufacturers’ devices. “We suspect that some of this similarity is due to the technical restraints associated with implanted technologies. Other similarities, however, indicate that there is some cross-pollination between pacemaker manufacturers,” the researchers conclude.

Third-party hardware and software are used extensively in these medical systems. Across the four vendors, the systems examined used an average of 86 third-party components, 43 of which were vulnerable. On average, those third-party components carried 2,166 known vulnerabilities per device.

Among other things, home monitoring devices do not validate the source of firmware updates, creating the potential for a so-called “man-in-the-middle” attack that could deliver counterfeit firmware to a home monitoring device. In a related issue, none of the vendors studied digitally signed their firmware to verify that it is official and to prevent unauthorized firmware from running on devices.

In one case, Rios and Butts discovered unencrypted patient data, including Social Security numbers, patient names, phone numbers and medical data, stored on a pacemaker programmer. The patient data belonged to a well-known hospital on the East Coast and has been reported to the appropriate agency, the researchers said.

Software reuse and what the researchers refer to as “cross-pollination between vendors” appear to have exacerbated security woes. Rios and Butts note that all of the physician programmers they examined used the same “core application” to initialize the programmer hardware and interfaces, while communication with the implanted pacemakers is handled by device-specific “apps.” But security around the programmers proved weak. None of the four programmers studied by the WhiteScope researchers required physicians to authenticate to the device first, and the researchers found no cryptographically signed pacemaker firmware.

“Given pacemaker firmware are not cryptographically signed, it would be possible [to] update the pacemaker device with a custom firmware. Once interaction with a pacemaker is complete, the pacemaker app passes control back to the main application and the main application will await another inductive telemetry wand action,” they said.

The similarities among implantable device ecosystems raise the possibility that common fixes could address many of the problems the researchers discovered, but the sheer number of flaws is a concern, Rios said, as is the move toward home-based care.

Insecure networks are even more prevalent in homes than in hospitals and doctors’ offices. That leaves home health devices such as monitoring systems susceptible to hacking and tampering, with possibly serious consequences, Rios warned.