In-brief: Facebook said thousands of ads that ran on its site in 2015 and 2016 have links to Russian information operations. The ads were designed to foment discord around a range of issues.

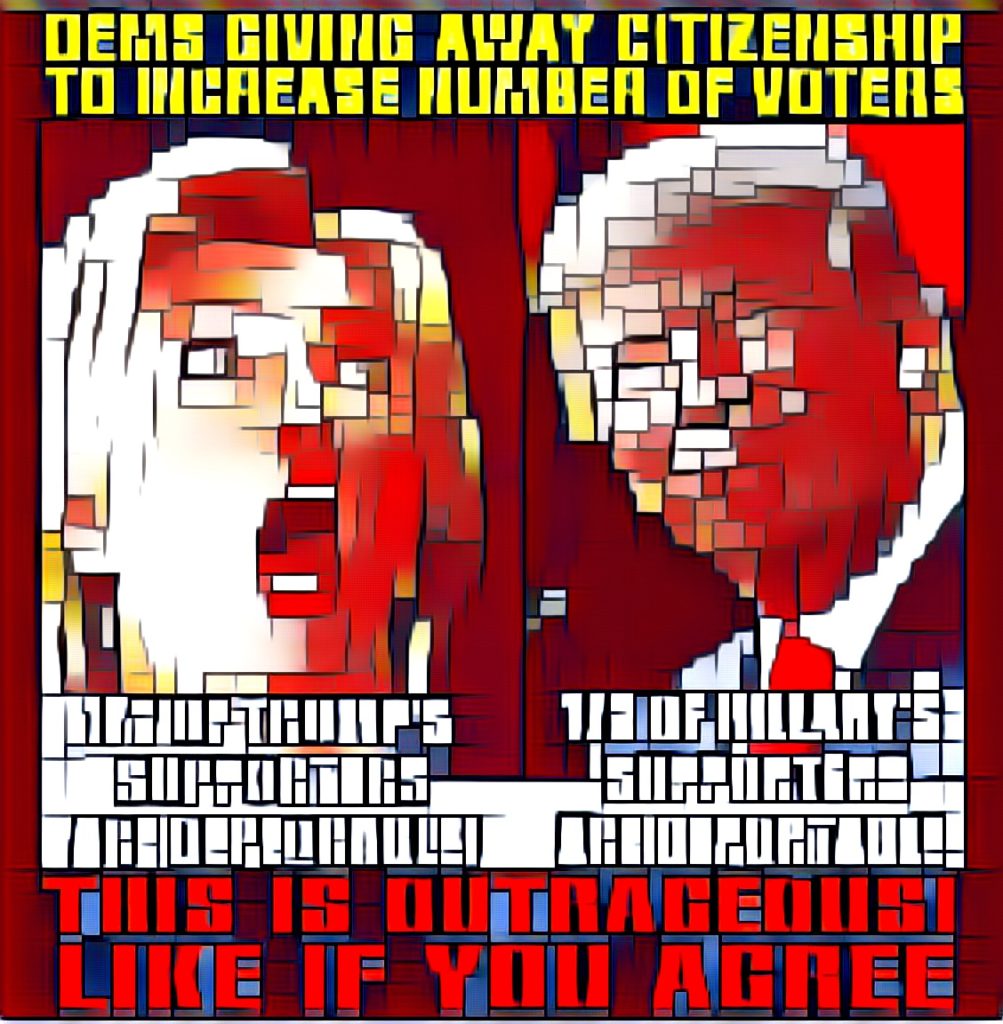

In the wake of the 2016 election and reports of widespread online disinformation campaigns, the social media giant Facebook found itself in the crosshairs of public opinion, accused of promulgating rumors and “fake news” – not to mention racist, homophobic, misogynistic and anti-immigrant memes. Suspicion for the campaigns, meanwhile, was directed at the government of Russian President Vladimir Putin.

Since that time, Facebook has been mostly silent on attribution for the online mischief in the lead-up to the 2016 election. But this week, the company released the findings of an internal audit that seem to corroborate theories that Russian actors were behind a campaign of social media stories and memes designed to foment discord in the U.S. in the months leading up to the November 2016 presidential vote. Facebook investigators identified around 3,000 advertisements that ran from June of 2015 to May of 2017 and were linked to fake accounts controlled from Russia that spent around $100,000 to run the campaign.

The ads promoted a range of issues, from LGBT matters to race, illegal immigration and gun rights. Few directly promoted a candidate in the presidential election. Instead, the ads seemed intended “to amplify divisive messages,” wrote Facebook Chief Security Officer Alex Stamos on Wednesday.

The campaign was directed by about 470 inauthentic accounts and Pages that have since been deactivated. Facebook’s analysis suggests the accounts and Pages were affiliated with one another and likely operated out of Russia, Stamos said. From his post:

The vast majority of ads run by these accounts didn’t specifically reference the US presidential election, voting or a particular candidate. Rather, the ads and accounts appeared to focus on amplifying divisive social and political messages across the ideological spectrum — touching on topics from LGBT matters to race issues to immigration to gun rights. About one-quarter of these ads were geographically targeted, and of those, more ran in 2015 than 2016.

The behavior, Stamos said, fit into a pattern the company first discussed in a paper on information campaigns released in April. That paper identified three characteristics of information operations on the network: targeted data collection, content creation, and amplification of false narratives or incendiary topics. The Facebook ad campaigns fit squarely into what Facebook identified as “false amplification” – a “coordinated activity by inauthentic accounts with the intent of manipulating political discussion (e.g., by discouraging specific parties from participating in discussion or amplifying sensationalistic voices over others).”

Facebook also expanded its initial inquiry to ads that had more tenuous ties to Russia. For example, the social media company flagged ads bought from accounts that had US IP addresses but whose language was set to Russian. Expanding the search that way identified approximately $50,000 more in potentially politically related ad spending on roughly 2,200 ads, Stamos wrote.

Facebook says it has shared the findings with US authorities investigating Russian tampering with the U.S. election and will continue working with them. The company also said it is improving automated systems to detect fraudulent account activity. For example, the company is using machine learning to help limit spam and reduce the posts people see that link to low-quality web pages. The social networking giant is also reducing stories from sources that post what it described as “clickbait headlines that withhold and exaggerate information.”

As for how effective the campaigns were and what, if any, role they played in the 2016 election, an analysis by The Daily Beast said that, if properly used, the $100,000 budget could garner between 23 million and 70 million impressions.
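To put that estimate in perspective, the range can be converted into an implied cost per thousand impressions (CPM). The short Python sketch below is only back-of-the-envelope arithmetic on the figures cited above. The function name `implied_cpm` is an illustrative assumption, and the result says nothing about what the advertisers actually paid or how The Daily Beast arrived at its range.

```python
def implied_cpm(budget_usd: float, impressions: float) -> float:
    """Return the cost per thousand impressions implied by a budget and a reach figure."""
    return budget_usd / impressions * 1000


# Figures as reported in the article (both are approximations).
budget = 100_000                 # ad spend Facebook attributed to the campaign, in USD
low_reach = 23_000_000           # lower bound of The Daily Beast's impression estimate
high_reach = 70_000_000          # upper bound of The Daily Beast's impression estimate

# Fewer impressions for the same budget means a higher cost per thousand.
cpm_high = implied_cpm(budget, low_reach)
cpm_low = implied_cpm(budget, high_reach)

print(f"Implied CPM: ${cpm_low:.2f} to ${cpm_high:.2f}")
# prints: Implied CPM: $1.43 to $4.35
```

In other words, the estimate assumes each thousand impressions cost somewhere between roughly $1.43 and $4.35.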

Facebook has not said how many users were reached with the ads, but The Daily Beast notes that some of the posts associated with a suspected Russian trolling operation, SecureBorders, received tens of thousands of likes and shares. Many of those posts were politically themed: attacking Democratic candidate Hillary Clinton while generally praising Republican Donald Trump.

But it is very likely that the ads started out independent of the presidential election, with the goal of simply polarizing the population, said Chris Sumner, a researcher and co-founder of The Online Privacy Foundation, which has researched how social media can be “weaponized.”

“Those sorts of ads could be used to create a groundswell in society. It doesn’t need to wait for an election to do that,” said Sumner. “If you’re trying to make a topic more salient in people’s minds – whatever the topic is – you don’t generally need to wait for an election cycle to begin generating an outrage for a particular topic,” he said.

Read more over on Facebook: An Update On Information Operations On Facebook | Facebook Newsroom