In-brief: Will artificial intelligence and machine learning assume the work now done by information security pros? Yes, and no.

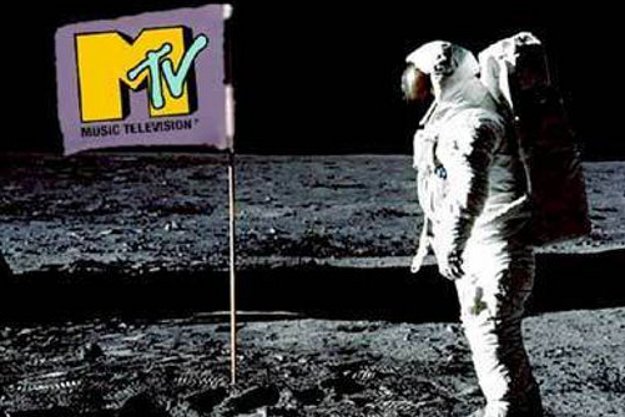

The Buggles’ 1979 song “Video Killed the Radio Star” was prescient in talking about the growing importance of video and technology, while worrying about the changes it would bring. So prescient, in fact, that when MTV first went on air two years later, it chose that song as its first offering – a choice that truly would be seen as prophetic, given the network’s meteoric rise during the 80s and 90s.

These days, I think it’s possible to peer into the near future and make a similar type of prediction – which is really just an observation – about the role of artificial intelligence, machine learning and other advancements in the information security space. In fact, it’s the subject of a recent piece I wrote that looks at how investments in artificial intelligence, machine learning, expert systems and the like are remaking the information security profession.

In short: the chronic undersupply of information security talent, coupled with the increasing frequency of cyber incidents, has both investors and entrepreneurs looking for ways to offload work that used to be performed by human operators onto machines.

From the article:

Much of the investment that’s going into the cybersecurity space to fuel the development of automation is directed at responding to cybersecurity incidents. Currently, humans are the ones who figure out how to respond to cyberattacks on networks, working to quickly block suspicious communications and analyze malicious behavior and software. But computers could perform the same functions — and do it much more quickly than people behind the keyboard….

In fact, the allure of machines quickly fixing vulnerabilities has led the Defense Advanced Research Projects Agency (DARPA), the Defense Department’s technology lab, to organize the first-ever hacking competition that pits automated supercomputers against each other at next month’s Black Hat cybersecurity conference in Las Vegas.

With the contest, DARPA is aiming to find new ways to quickly identify and eliminate software flaws that can be exploited by hackers, says DARPA program manager Mike Walker.

“We want to build autonomous systems that can arrive at their own insights, do their own analysis, make their own risk equity decisions of when to patch and how to manage that process,” said Walker.

Also worth noting: IBM’s plans to train a new, cloud-based version of its Watson cognitive technology to detect cyberattacks and computer crimes. As part of its training, IBM fed Watson a dictionary of information security-specific terms such as “exploit” and “dropper” and programmed it to identify and respond to cybersecurity incidents.

But will computers armed with “deep learning” algorithms really obviate humans? Most of the experts I spoke with said “no” – at least not in the near term.

Typical was the opinion of Cody Pierce, the Director of Vulnerability Research at the security firm Endgame, who noted the “hype” surrounding AI and cyber. “Artificial intelligence has a lot of potential highlighting things for operators,” he said. Using machine learning to “automate menial tasks” could yield far better results than would be possible with human operators, he said. And that, in turn, could “augment” less trained security analysts to do “powerful things,” Pierce said.

At the higher levels of the information security chain of responsibility, however, Pierce doesn’t see machines supplanting people. “(Artificial intelligence) will never replace the Tier 3 advanced expert,” he said. “It’s just a way to focus hunters on what’s bad and let them do their thing.”

That was also the opinion of John Pescatore, the Director of Emerging Security Trends at The SANS Institute. He saw artificial intelligence and machine learning as “force multipliers” that could help a lot in prioritizing the work of humans, rather than replacing them altogether.

True? Possibly. Possibly not. The analyst firm McKinsey & Company recently released a report entitled (tantalizingly) “Where machines could replace humans – and where they can’t (yet).” While the report didn’t single out information security work, it did identify data collection and data processing as two of the tasks most susceptible to being automated.

Without a doubt, those two tasks are a big part of the current information security workload. For example, IBM has reported that the average organization is presented with more than 200,000 “pieces of security event data” each day. Responding to “false positives” in that data is a huge and costly problem for organizations of all types.

Sure, computers right now don’t have the ability to understand and analyze complex, malicious cyber operations. However, refinements in artificial intelligence sometimes referred to as “deep learning” are giving machines the ability to mimic the kind of human intuition that allows top-level analysts to detect such campaigns.

After all: changes in the landscape of online risk are constant. We’re hearing a lot about ransomware attacks these days, because ransomware has become a profit center for cyber criminals and vexing for organizations in healthcare, manufacturing, the public sector and more. But it’s useful to remember that three years ago, the problem everybody was focused on was targeted, “advanced and persistent” threats. Five years ago it was botnets and denial of service attacks. Ten years ago it was spam. Fifteen years ago, it was polymorphic worms like Blaster and Sobig.

In other words, threats – and our awareness of them – are always changing, but they’re also on a continuum. APTs didn’t go away. They’re still a problem. Heck, Conficker is still quite common. We just stopped talking about it. As the pace of technology and its adoption quicken, so too do the threats associated with it. It seems to make sense that we’ll be looking to machines to help us deal with the problems that our reliance on machines brings about.
