It has become obvious (to me, anyway) that spam, phishing, and malicious software are not going away. Rather, their evolution (e.g., from phishing to spear phishing) has made it easier to penetrate business networks and has increased the precision of such attacks.

Yet we still apply the same basic technologies, such as Bayesian spam filters and blacklists, to keep the human at the keyboard from unintentionally letting these miscreants onto our networks.

Ten years ago, as spam and phishing were exploding, the information security industry offered multiple solutions to this hard problem. A decade later, the solutions remain: SPF (Sender Policy Framework), DKIM (DomainKeys Identified Mail) and DMARC (Domain-based Message Authentication, Reporting & Conformance). Yet we still find ourselves behind the threat, rather than ahead of it. Do we have the right perspective on this? I wonder.

The question commonly asked today is: “How do we identify the lie?” But as machine learning and data science become the new norm, I’m not convinced that this is the right question. Asking “how do we identify the lie” has led us to focus on identifying spammy or phishy email based on content and other tell-tale properties.

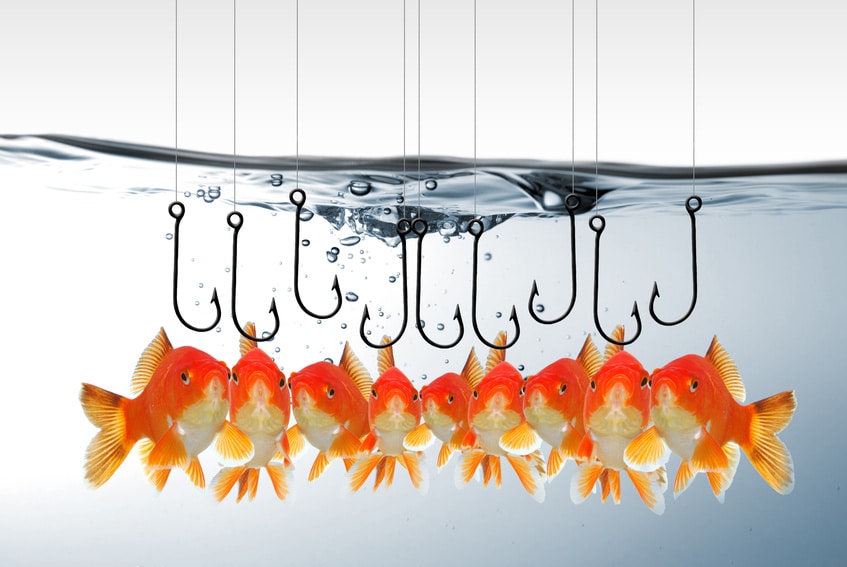

Technology does this to decent effect, but email servers are still getting slammed with spam. Unsolicited email is sent by the millions or hundreds of millions of messages and usually poses as legitimate email so it can get to your inbox. We’ve seen this with brand-based phishing, such as fake emails posing as well-known financial institutions, and we have seen spoofed spam email drop malware onto networks quite easily.


In business, email is the most commonly used service and is a vital communications medium within today’s high-speed business culture. But what if the question wasn’t “how do we identify a lie,” but “how do we identify the truth?” When we look at human psychological behavior more broadly, our brain determines familiarity and routine as it learns from experiences over time. Essentially, a trust model is established around the familiar, in which people generally feel safe.

On the other hand, introducing unfamiliar situations or experiences triggers a different response characterized by heightened awareness, mistrust, defensiveness, fear, uncertainty and doubt. In the online context, brand spoofing, such as fake bank sites distributed to a user’s inbox, exploits familiarity or trust. The user only sees the content of the message. Most users have no understanding of the technology delivering their email, and even those who do are busy focusing on running a business.

Behind the scenes, the email headers describe the lie. To use an analogy: Alice makes a verbal promise to Bob, but Alice’s fingers are secretly crossed behind her back. Bob only hears the promise. Behind it is the lie, which Bob will discover only after it’s too late.

Scratch a lie, find a thief

What are we missing? The Truth! We react to the overt threats: the observables, the indicators, the adversary, the underground. This is increasingly the product of (premium) threat research. But in reading it, we lose the ability to think intuitively. Do we know ourselves (as the ancient Greek maxim advises)? Do we know who we trust?

When it comes to building threat models, our jobs have become overly complicated. But they shouldn’t be.

TRUST: Threat Reduction via Understanding Subjective Treatment

I propose building a trust model focused on what we know is good and familiar to the network and systems. This new model would start with the fundamentals:

- Information: What information are you dealing with, and why? How and when is it handled, by whom, and where is it going?

- Secrets: Where are your secrets, including data, passwords, and encryption keys? How do you protect them?

- Actors: Who are the actors involved, bad and good?

This model will enhance the way we protect information and will reduce the requirements that carry heavy cost to the business in processing, analysis, and dissemination. It will (not incidentally) also offer improvements in scale and total cost of ownership. In application, this model will reform online business reputation and keep deception from entering your network. Example use cases:

Brand-based phishing: Analyze a large sample of headers from legitimate bank-originated emails, note the similarities, and train the model to identify the pattern of truth. With mail classified more effectively (for example, personal mail from Gmail versus business-class mail from bankX.com), a phishing email using the bank’s brand will stand out as unfamiliar and illicit because it deviates distinctly from the norm. Additionally, digital signatures (such as DKIM) would finally be effective in this model, since the key can be partly derived from the properties of familiarity that the original bank has proven. Essentially, any incongruent behavior will be rejected.
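To make the idea of a "pattern of truth" concrete, here is a minimal sketch in Python, assuming the only features of interest are the From, Return-Path and DKIM `d=` domains. The function names (`header_features`, `build_baseline`, `deviations`) and the `bankx.example` domain are hypothetical, chosen for illustration; a real model would draw on many more header properties and a far larger sample.

```python
import re
from collections import Counter
from email import message_from_string
from email.utils import parseaddr


def domain_of(address_header):
    """Return the lowercased domain of an address header, or '' if absent."""
    _, addr = parseaddr(address_header or "")
    return addr.rpartition("@")[2].lower() if "@" in addr else ""


def header_features(raw_message):
    """Extract a few header properties (an illustrative, assumed feature set)."""
    msg = message_from_string(raw_message)
    # The d= tag of a DKIM-Signature header names the signing domain.
    match = re.search(r"\bd=([^;\s]+)", msg.get("DKIM-Signature", ""))
    return {
        "from_domain": domain_of(msg.get("From")),
        "return_path_domain": domain_of(msg.get("Return-Path")),
        "dkim_domain": match.group(1).lower() if match else "",
    }


def build_baseline(legit_messages):
    """Count every feature value observed in known-legitimate mail."""
    baseline = {}
    for raw in legit_messages:
        for name, value in header_features(raw).items():
            baseline.setdefault(name, Counter())[value] += 1
    return baseline


def deviations(baseline, raw_message):
    """List the features whose value was never seen in the legitimate sample."""
    features = header_features(raw_message)
    return sorted(
        name for name, value in features.items()
        if value not in baseline.get(name, {})
    )
```

Calling `deviations(baseline, raw_message)` on an incoming message returns the names of the header properties that fall outside what the legitimate sample has shown. An empty list means the message looks familiar on these three properties only, which is far from proof that it is genuine.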

File attachment behavior within emails: By classifying email types such as “personal” and “business,” along with other features like “new event,” “never-before-seen email,” and “recipient type” (the HR department will have different expectations and experiences than other departments), one could help business email recipients recognize malicious attachments and discard them without much analysis. An example question for a non-HR department: When someone in the workplace receives a first-time contact from a legitimate and reputable business-class email address, how often does it contain an attachment such as a PDF?

By performing measurements of this type of activity, the behavior could be considered in the TRUST model. Other possible points of interrogation:

- How many personal Gmail accounts should be sending email claiming to be a legitimate online bank?

- How many non-government IP addresses (or foreign, or personal ones) should be sending emails identifying themselves as the FBI (re: FBI Nigerian scams)?
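Questions like these can be turned into a simple measurement. The sketch below is one assumed way to do it: it compares the brand a sender claims in its display name with the class of the sending domain. The `PERSONAL_PROVIDERS` and `BRAND_DOMAINS` tables are hypothetical placeholders, and the substring match on the display name is deliberately naive.

```python
from email.utils import parseaddr

# Illustrative tables only; a real deployment would maintain these from data.
PERSONAL_PROVIDERS = {"gmail.com", "outlook.com", "yahoo.com"}
BRAND_DOMAINS = {
    "bankx": {"bankx.example"},  # hypothetical bank
    "fbi": {"fbi.gov"},
}


def sender_class(domain):
    """Classify a sending domain as 'personal' webmail or 'business'."""
    return "personal" if domain in PERSONAL_PROVIDERS else "business"


def claimed_brand_mismatch(from_header):
    """Return (brand, sender_class) when the display name claims a brand
    that the sending domain does not belong to, otherwise None."""
    display, addr = parseaddr(from_header)
    domain = addr.rpartition("@")[2].lower() if "@" in addr else ""
    for brand, domains in BRAND_DOMAINS.items():
        # Naive substring match on the display name (an assumption).
        if brand in display.lower() and domain not in domains:
            return brand, sender_class(domain)
    return None
```

Counting how often `claimed_brand_mismatch` returns a result for mail that later proves legitimate gives a baseline answer to "how many should there be?" If that number is effectively zero, any future hit is a strong sign of deception.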

File behavior analysis: Documents and PDFs can be baselined using fuzzy hashing and temporal, frequency and entropy analysis. Sampling human-originated documents establishes what legitimate, human-modified documents look like. Exploits and malware found in documents will exceed the standard human baseline and deviate significantly from the established trusted information. This can be performed without invading the privacy of incoming documents, and can be handy in detecting malware that attaches itself to documents in a shared environment.
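As a rough illustration of the entropy part of that baseline, the sketch below computes the Shannon entropy of a file's bytes and flags files that fall far from the mean of a trusted sample. The function names and the three-standard-deviation threshold are assumptions made for this example. Compressed streams inside legitimate PDFs also score high, so entropy would need to be combined with the other measurements rather than used alone.

```python
import math
import statistics
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy of a byte string, in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    return -sum(
        (count / total) * math.log2(count / total)
        for count in Counter(data).values()
    )


def entropy_baseline(samples):
    """Mean and sample standard deviation of entropy over trusted documents.

    Needs at least two samples, since statistics.stdev requires that.
    """
    values = [shannon_entropy(sample) for sample in samples]
    return statistics.mean(values), statistics.stdev(values)


def deviates(baseline, data, threshold=3.0):
    """True when the entropy of `data` lies more than `threshold` standard
    deviations from the baseline mean (threshold is an assumed default)."""
    mean, stdev = baseline
    distance = abs(shannon_entropy(data) - mean)
    if stdev == 0:
        return distance > 0
    return distance / stdev > threshold
```

Only aggregate statistics of the bytes are examined, never the meaning of the content, which is consistent with the privacy point above.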

In conclusion:

Let’s stop repeating the mistakes of the past. Let’s pit unyielding truth against ever-evasive deception to get ahead of threats, defending and maintaining our gains while exploiting the opponent’s unexpected loss. Our victory will force the threat actor to be behind us for a change. That is the ultimate goal.